From Office 365 to Azure Event Grid, the events must Flow

/Photo by Archana More on Unsplash

In this blog post, we capture all the events across an Office 365 Tenant from multiple event sources, gather them, and send them through an Azure Event Grid.

We then listen, filter and handle our events in a central, unified way.

The events must Flow.

This is also the full write up of this microblog posted to Twitter #FlowNinja earlier this month.

Plan

What benefit do we get from this?

Listen to every event across an Office 365 Tenant

Construct a uniform event message

Send them into a Serverless Event Solution - Azure Event Grid

Filter and catch our events

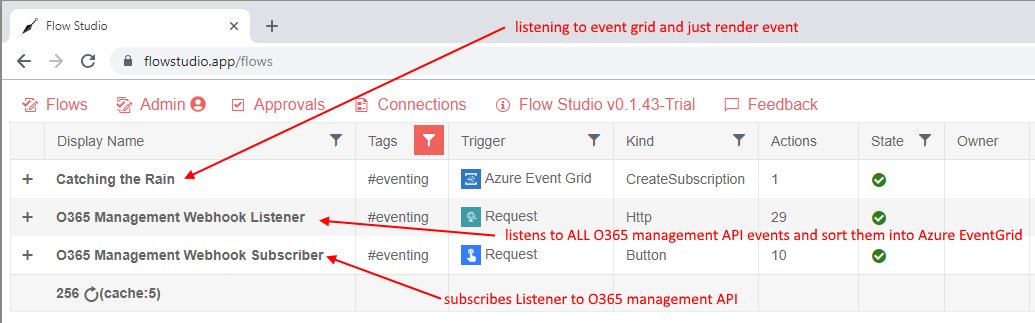

We want to build 3 Flows

What benefit do we get from this?

First, we see the increasing availability of event hooks - we have subscriptions, delta queries, or webhooks, across various different products in Office 365. Some products like SharePoint is getting a SocketIO webhook. There will be more events, and our event handling design must evolve.

Second, we see the cost-effective solutions to handle these ever increasing flood of events in the form of Serverless compute. This is true with Azure Functions, Microsoft Flow or Azure Logic Apps.

We end up with a lot of individual event sources and a lot of individual event receivers. This is a common event handling problem. As number of events we handle increases, the worse the event management problem becomes.

Consider you have a “handle a document uploaded to a library” event - a very typical SharePoint Workflow. Now consider this library is cloned to a hundred project sites.

If we clone the event handler a hundred times, we have a problem.

If you have already done this with Flow, then try https://FlowStudio.app to help you manage them.

Ooh inline product placement!

If you are a developer, then consider this scenario.

Consider a typical event handling in the browser. A decade ago we used jQuery like this:

// 2008 $('button').click(handler); // 2018 $(global).on('click', 'button', handler);

And gradually we find that unacceptable, because we have buttons, events everywhere, and managing individual event hooks was tedious and error prone. Eventually, we moved to a global handler model, and we filter just prior to event being raised.

The headache-less way to handle events is to set the hooks all at the global root level, and then filter by the event source and event type.

That is the exact reason we need Azure Event Grid.

Centrally manage our events

Decouple the event source from event handlers

Stay sane, with a hard problem

Listen to every event across an Office 365 Tenant

I had previously wrote about listening to Office 365 Management API via the HTTP action and app-only permissions. What I did not realize was that the Office 365 Management API also have fantastic webhooks.

I read about the webhooks from Kent Weare’s post, where he uses this event to get a trigger when Flows are created in the tenant.

https://flow.microsoft.com/en-us/blog/automate-flow-governance/

These are grouped into several categories: AzureAD, Exchange, SharePoint and General (other).

This is the subscriber. One picture of 4 blocks. I’m subscribing to three webhooks at once.

The Office 365 Management API is a fantastic general event source. The downside is that it’s not instant - event handler is called between 10-20minutes after the actual event. So it is great as an audit webhook, or for scheduling files or search or to signal for a bot to re-scan a document. But it’s not an instant webhook.

Of course, if our goal is to send events into an Event Grid - we can work with multiple event sources at the same time. We can add Microsoft Graph events or subscribe to SharePoint list webhooks directly.

This is the top of the Listener

Construct a uniform event message

The event grid has a event JSON structure, it also supports a CloudEvent structure.

When I built my implementation, the Event Grid connector is still in preview and I had troubles publishing a Cloud Event structure. I assume this wouldn’t be a problem anymore as the connector evolves.

This is the final loop design - all done.

Remember, we are running Serverless so abuse/utilize every opportunity to use as many Azure Servers as you can - if you can fan-out to parallelism you must.

Don’t talk to each individual HTTP action one at a time. Do (up to 50) all at the same time.

Send them into a Serverless Event solution - Azure Event Grid

The Azure Event Grid is a serverless event processing pipeline. It decouples our event source(s) from our event handlers.

Here is our first handler.

This catches every event on the Event Grid - in Event Grid, we see we have our first webhook attached - it appears as a LogicApps webhook.

Here are three examples of what it caught:

Flow created Event

Site Collection created Event

File uploaded Event

Filter and catch our events

We see the very specific webhook now registered on the event grid - and the filters are also listed

PPTX filter only runs when the file I’ve uploaded is a PowerPoint file.

Summary

I have been talking a lot about Serverless and how our tools and design must evolve. Having a unique Office 365 to Event Grid solution is something I talked about as far back as 2017. I’m glad a year later I’ve finally got a great prototype going.

Office 365 Management API is a great webhook source that catches all sorts of events. The downside is that it is an audit webhook, so the delay may not be acceptable to your needs.

Using Azure Event Grid to perform filtering and subscription gives us the unique ability to see EVERYTHING that’s going on in our tenant. That has tremendous value.

Because event source and event handling is now decoupled - we can add new event sources to push to the same Azure Event Grid. We can do this from Microsoft Graph, we can do this from SharePoint, or we can do this from a whole myriad of triggers available in Microsoft Flow

We can write Azure Functions to trigger off the Event Grid, and it would be visible as well.

I was reading and appreciating sending events to the new Azure SignalR service from Azure Functions - that would be pretty amazing to convert an event grid message into a websocket event.

https://twitter.com/nthonyChu/status/1044427579460145152

The possibilities are endless. Our tools and our design must evolve.