How to get live FIFA World Cup results via Microsoft Flow into your SharePoint Intranet WebPart

GitHub tweeted a link to an npm Node.js CLI project that uses data from http://football-data.org. Seeing that, I decided we needed to build a SharePoint Modern SPFx webpart so we can load it into all our intranets.

Plan

- Use Microsoft Flow to call the football-data API

- A Thesis in Microsoft Flow's Parse JSON action

- Format the data and write it into an HTML segment in SharePoint

- Build a simple SPFx webpart that will load the HTML segment into the DOM

- Future: Using Events API

- Future: Using API Key

- Downloads

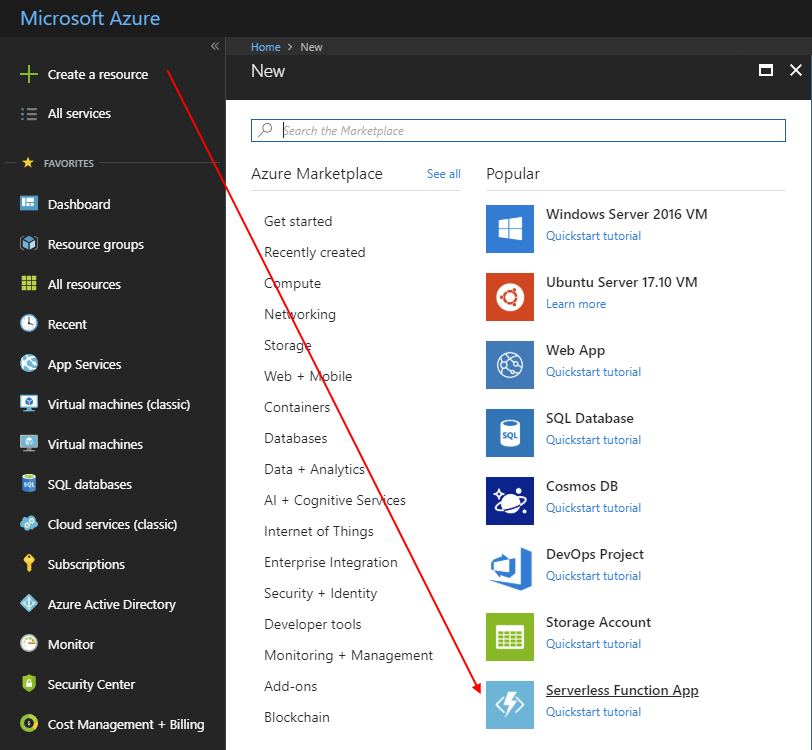

Use Microsoft Flow to call the football-data API

We want to build this:

First, let's look at the data source API.

That's nice - we don't need to figure out which competition is the 2018 World Cup. It's 467.

http://api.football-data.org/v1/competitions/467

We want the game fixtures, though, so we will call:

http://api.football-data.org/v1/competitions/467/fixtures
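If you want to poke at the endpoint outside Flow, here is a minimal JavaScript sketch of the same GET request the Flow HTTP action makes. It assumes a runtime with a global `fetch` (Node 18+ or a browser) and that the v1 endpoint still responds the way it did in 2018; `buildFixturesUrl` and `fetchFixtures` are hypothetical helper names, not part of any library.

```javascript
// Base URL of the football-data.org v1 API used in this post.
const API_BASE = "http://api.football-data.org/v1";

// Hypothetical helper: fixtures URL for a competition id (467 = 2018 World Cup).
function buildFixturesUrl(competitionId) {
  return `${API_BASE}/competitions/${encodeURIComponent(competitionId)}/fixtures`;
}

// Hypothetical helper: GET the fixtures and return the parsed "fixtures" array.
// fetchImpl defaults to the global fetch so it can be swapped out for testing.
async function fetchFixtures(competitionId, fetchImpl = globalThis.fetch) {
  const response = await fetchImpl(buildFixturesUrl(competitionId));
  if (!response.ok) {
    throw new Error(`football-data request failed: HTTP ${response.status}`);
  }
  const body = await response.json();
  // Same shape the Flow expression reads later: body(...)?['fixtures']
  return body?.fixtures ?? [];
}
```

Assuming the endpoint is still reachable, `fetchFixtures(467).then((fixtures) => console.log(fixtures.length))` would log how many fixtures came back.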

A Thesis in Microsoft Flow's Parse JSON action

To make the Select operation easier, we have two choices:

- "type the expressions manually" or

- "configure Parse JSON to make our lives easier"

Parse JSON deserves a full post of its own, because there are several problems we'll run into and each one needs a workaround. The Parse JSON step is covered in the next blog post:

http://johnliu.net/blog/2018/6/a-thesis-on-the-parse-json-action-in-microsoft-flow
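The linked post covers Parse JSON properly. As a hedged taste of one likely problem, here is a sketch of a schema for the fixtures response, written as a JavaScript object (Parse JSON takes a JSON Schema). The property names come from the expressions used later in this post; the types, especially allowing `null` for goals in games not yet played, are my assumption about the data. The `typeMatches` helper is hypothetical and only shows why a plain `"integer"` type rejects `null`.

```javascript
// Assumed JSON Schema for the v1 fixtures response; only the fields this post selects.
const fixturesSchema = {
  type: "object",
  properties: {
    fixtures: {
      type: "array",
      items: {
        type: "object",
        properties: {
          date: { type: "string" },
          status: { type: "string" },
          matchday: { type: ["integer", "null"] },
          homeTeamName: { type: "string" },
          awayTeamName: { type: "string" },
          result: {
            type: "object",
            properties: {
              // Unplayed games are assumed to report null goals.
              goalsHomeTeam: { type: ["integer", "null"] },
              goalsAwayTeam: { type: ["integer", "null"] },
            },
          },
        },
      },
    },
  },
};

// Hypothetical helper: does a value satisfy a JSON Schema "type" (string or array)?
function typeMatches(value, schemaType) {
  const allowed = Array.isArray(schemaType) ? schemaType : [schemaType];
  return allowed.some((t) => {
    switch (t) {
      case "null": return value === null;
      case "integer": return Number.isInteger(value);
      case "number": return typeof value === "number";
      case "string": return typeof value === "string";
      case "boolean": return typeof value === "boolean";
      case "array": return Array.isArray(value);
      case "object": return value !== null && typeof value === "object" && !Array.isArray(value);
      default: return false;
    }
  });
}
```

With `"integer"` alone, a `null` goal count fails validation; listing `["integer", "null"]` lets unplayed fixtures through.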

Format the data and write it into an HTML segment in SharePoint

If we skip Parse JSON, we need to type out the expressions manually.

In the Select action, the From field is:

body('HTTP_world-cup-fixtures')?['fixtures']

And we need the properties:

date: convertFromUtc(item()?['date'], 'AUS Eastern Standard Time')

Day: item()?['matchday']

Team 1: item()?['homeTeamName']

Team 2: item()?['awayTeamName']

Team 1 Goals: item()?['result']?['goalsHomeTeam']

Team 2 Goals: item()?['result']?['goalsAwayTeam']

Status: item()?['status']
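To make the mapping concrete, here is a hedged JavaScript equivalent of that Select step: each fixture becomes one row with the same column names. It assumes the v1 field names above. Flow's `'AUS Eastern Standard Time'` is a Windows time zone ID, while JavaScript's `Intl` API uses IANA names, so the sketch assumes `Australia/Sydney` as the equivalent zone. `toSydneyTime` and `toRow` are hypothetical names.

```javascript
// Hypothetical: convert an ISO UTC timestamp to "YYYY-MM-DD HH:mm" in Sydney time,
// roughly what convertFromUtc(..., 'AUS Eastern Standard Time') does in Flow.
function toSydneyTime(isoUtc) {
  if (!isoUtc) return null;
  const parts = new Intl.DateTimeFormat("en-AU", {
    timeZone: "Australia/Sydney",
    year: "numeric",
    month: "2-digit",
    day: "2-digit",
    hour: "2-digit",
    minute: "2-digit",
    hourCycle: "h23",
  }).formatToParts(new Date(isoUtc));
  // Pick each component by its part type, independent of the locale's ordering.
  const get = (type) => parts.find((p) => p.type === type).value;
  return `${get("year")}-${get("month")}-${get("day")} ${get("hour")}:${get("minute")}`;
}

// Hypothetical: the Select mapping, one row per fixture. Optional chaining plays the
// role of Flow's ?[] operator, so missing properties become undefined instead of errors.
function toRow(item) {
  return {
    date: toSydneyTime(item?.date),
    Day: item?.matchday,
    "Team 1": item?.homeTeamName,
    "Team 2": item?.awayTeamName,
    "Team 1 Goals": item?.result?.goalsHomeTeam,
    "Team 2 Goals": item?.result?.goalsAwayTeam,
    Status: item?.status,
  };
}
```

Applied to the whole response, `fixtures.map(toRow)` gives the same rows the Select action produces.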

If we use Parse JSON, selecting the properties is easier.

We do the up-front work in Parse JSON to describe the type of each property. This lets the dynamic content pane show us the appropriate choices.

We still have to type out the date conversion to local time, unless UTC is fine for you.

We have our nice HTML table now.
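The HTML table itself can also be sketched in JavaScript, for example to preview what ends up in the SharePoint file. This is not Flow's own implementation: it is a minimal, hypothetical `toHtmlTable` helper that uses the keys of the first row as column headers and escapes cell values so a team name can't inject markup.

```javascript
// Escape the characters that matter inside HTML text content.
function escapeHtml(value) {
  return String(value ?? "")
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;");
}

// Hypothetical: build an HTML table from an array of flat row objects.
// Column headers come from the keys of the first row; null/undefined cells render empty.
function toHtmlTable(rows) {
  if (rows.length === 0) return "<table></table>";
  const columns = Object.keys(rows[0]);
  const header = columns.map((c) => `<th>${escapeHtml(c)}</th>`).join("");
  const body = rows
    .map((row) => `<tr>${columns.map((c) => `<td>${escapeHtml(row[c])}</td>`).join("")}</tr>`)
    .join("");
  return `<table><thead><tr>${header}</tr></thead><tbody>${body}</tbody></table>`;
}
```

Rendering unplayed goals as empty cells keeps the table readable before kick-off.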

Build a simple SPFx webpart that will load the HTML segment into the DOM

For this step, I cheated and used Mikael Svenson's wonderful Modern Script Editor webpart.

Then I pasted in this code:

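```html
<div id="fifa-games"></div>
<script>
  // Fetch the HTML table the Flow wrote to SharePoint; "same-origin" sends the
  // user's cookies so the request is authenticated against the site.
  window.fetch('/Shared%20Documents/fifa-2018-fixtures.html', { credentials: "same-origin" })
    .then(function (response) {
      if (!response.ok) {
        throw new Error('Could not load fixtures: HTTP ' + response.status);
      }
      return response.text();
    })
    .then(function (text) {
      // Insert the table markup into the placeholder div.
      document.getElementById('fifa-games').innerHTML = text;
    });
</script>
```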

The script fetches the contents of fifa-2018-fixtures.html and writes them into the div#fifa-games element.

You could also use the classic Content Editor webpart and point its Content Link property at the file, but that webpart isn't available on the new modern pages.

Results

Works really well in Microsoft Teams too.

Future: Using Events API

football-data.org has an Events API where they will call our callback URL when a team scores in a game fixture.

http://api.football-data.org/event_api

This requires a first Flow that loops through all the fixtures in the FIFA 2018 competition and sets up an event callback for each one. Then, when those games are played and a goal is scored, the callback can call the same parent Flow to update the result HTML table immediately.

This implementation won't require a scheduled recurrence to refresh the data daily (or hourly). It can be refreshed on-demand!
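The registration loop in that first Flow could look roughly like this. I don't know the Events API's exact request format, so the real registration call is left to a caller-supplied `register` function. `planSubscriptions`, `subscribeAll`, and the set of "done" statuses are assumptions (the statuses are my recollection of the v1 fixture data), not documented Events API behaviour.

```javascript
// Assumed v1 statuses for games that can no longer produce goal events.
const DONE_STATUSES = new Set(["FINISHED", "CANCELED"]);

// Hypothetical: choose the fixtures that still need a goal-event subscription.
function planSubscriptions(fixtures, callbackUrl) {
  return fixtures
    .filter((f) => !DONE_STATUSES.has(f?.status))
    .map((fixture) => ({ fixture, callbackUrl }));
}

// Hypothetical: register each planned subscription one at a time, using a
// caller-supplied register(fixture, callbackUrl) that wraps the real API request.
// Returns the number of registrations attempted.
async function subscribeAll(fixtures, callbackUrl, register) {
  const plan = planSubscriptions(fixtures, callbackUrl);
  for (const { fixture, callbackUrl: url } of plan) {
    await register(fixture, url);
  }
  return plan.length;
}
```

Registering sequentially rather than with `Promise.all` is a deliberate choice here: each registration is an API call, and firing dozens at once would burn through the rate limit discussed below.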

Future: Using API Key

When calling the football-data.org API without an API key, the rate limit is 50 calls per day. We can roughly track this in the response header: the remaining count goes down by 2 per Flow run.

Because this runs on a Microsoft Flow server, I have no idea whether your calls share an IP-based rate limit with someone else running this in the same Azure data centre region. So if you do hit the rate limit, it's better to register an API key with an email address and add it to the HTTP request header.
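Here is a hedged sketch of both ideas: sending the key and keeping an eye on the remaining quota. The header names are assumptions from my memory of the football-data.org docs (`X-Auth-Token` for the key, `X-Requests-Available` for the remaining count), so check the current documentation before relying on them. The arithmetic uses the figures above: 50 calls a day at 2 calls per run allows 25 runs, so an hourly recurrence (24 runs, 48 calls) only just fits.

```javascript
// Assumed header names; verify against the football-data.org documentation.
const API_KEY_HEADER = "X-Auth-Token";
const REMAINING_HEADER = "X-Requests-Available";

// Hypothetical: request headers, adding the API key only when one is configured.
function buildHeaders(apiKey) {
  return apiKey ? { [API_KEY_HEADER]: apiKey } : {};
}

// Hypothetical: read the remaining-call count from a fetch-style Headers object
// (anything with a get(name) method). Returns null when the header is missing or invalid.
function remainingCalls(headers) {
  const raw = headers.get(REMAINING_HEADER);
  const n = raw == null ? NaN : Number.parseInt(raw, 10);
  return Number.isNaN(n) ? null : n;
}

// How many Flow runs fit in the daily allowance, given the calls each run makes.
function runsPerDay(dailyLimit = 50, callsPerRun = 2) {
  return Math.floor(dailyLimit / callsPerRun);
}
```

With the defaults, `runsPerDay()` is 25; anything more frequent than hourly needs an API key.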

Downloads

Downloads are available on https://github.com/johnnliu/flow

Flow Export: https://github.com/johnnliu/flow/blob/master/Get-FIFA-2018_20180626144356.zip

Script Editor Snippet: https://github.com/johnnliu/flow/blob/master/get-fifa-2018-scripteditor.txt