One Connection to Proxy Them All - Microsoft Flow with Azure Functions Proxies

Office 365-licensed PowerApps and Microsoft Flow let you have one custom connection. I think that's cute. I also think the limitation is absurd, considering how often we need to break out of the existing capabilities whenever we want to do something interesting. Custom connections shouldn't be limited: if the BAP team wants us to innovate the crap out of this platform, this is counterproductive. I hope the team reconsiders.

Regardless, the limit is pointless, because we can bypass it right away: Azure Functions has Proxies.

This is a post in a series on Microsoft Flow.

- JSON cheatsheet for Microsoft Flow

- Nested-Flow / Reusable-Function cheatsheet for Microsoft Flow

- Building non-JSON webservices with Flow

- One Connection to Proxy Them All - Microsoft Flow with Azure Functions Proxies

- Building Binary output service with Microsoft Flow

In this post, we talk about:

- Azure Functions proxy for Microsoft Flow connections

- Azure Functions proxy for itself

- Azure Functions proxy for bypassing CORS

- Azure Functions proxy for 3rd party APIs

- One Simple API for me

So in one Connection to proxy them all, in one Request to Bind them. And in Serverless something about we pay nothing - hey I'm a blogger not a poet.

Azure Functions Proxy for Microsoft Flow

In an earlier post, I talked about how we can turn Microsoft Flow into web services with the Request trigger. This is awesome, but it leaves you with an endpoint that looks like sin.

https://prod-18.australiasoutheast.logic.azure.com:443/workflows/76232c77d84d424b8e56ab2f88b672c4/triggers/manual/paths/invoke?api-version=2016-06-01&sp=%2Ftriggers%2Fmanual%2Frun&sv=1.0&sig=FAKE_KEY_NXpUV_FEyhl1BKgKftQ0-rcOPcE

Let's clean this up with Azure Functions Proxies. First, enable it.

Create a proxy: set the name to "uploadBinary" and the template to "upload". Choose your methods (POST only for this one), and paste in the backend URL.

Hit Create and our proxy URL is generated: https://funky-demo.../upload

We had a Swagger OpenAPI file for this binary web request, so let's tidy it up:
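The same proxy can also be written down as a proxies.json file rather than clicked together in the portal. Here is a minimal sketch that builds one, assuming the proxies.json layout described in the Azure Functions Proxies documentation. The backend URL is a placeholder I made up, and `build_proxy_config` is just a helper name for this example.

```python
import json

# Placeholder for the long Flow Request-trigger URL (assumption: paste your own).
FLOW_BACKEND_URL = (
    "https://example.invalid/workflows/YOUR_WORKFLOW_ID"
    "/triggers/manual/paths/invoke?api-version=2016-06-01&sig=YOUR_SIG"
)


def build_proxy_config(name, route, methods, backend_uri):
    """Return a proxies.json document containing a single proxy."""
    return {
        "$schema": "http://json.schemastore.org/proxies",
        "proxies": {
            name: {
                # The route and methods the proxy answers on.
                "matchCondition": {"methods": methods, "route": route},
                # Where the request is forwarded to.
                "backendUri": backend_uri,
            }
        },
    }


config = build_proxy_config("uploadBinary", "/upload", ["POST"], FLOW_BACKEND_URL)
print(json.dumps(config, indent=2))
```

The ugly part of the URL only ever appears in `backendUri`, which is the whole point: callers see /upload and nothing else.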

    {
      "swagger": "2.0",
      "info": {
        "description": "",
        "version": "1.0.0",
        "title": "UploadBinary-proxy"
      },
      "host": "funky-demo.azurewebsites.net",
      "basePath": "/",
      "schemes": [
        "https"
      ],
      "paths": {
        "/upload": {
          "post": {
            "summary": "uploads an image",
            "description": "",
            "operationId": "uploadFile",
            "consumes": [
              "multipart/form-data"
            ],
            "parameters": [
              {
                "name": "file",
                "in": "formData",
                "description": "file to upload",
                "required": true,
                "type": "file"
              }
            ],
            "responses": {
              "200": {
                "description": "successful operation"
              }
            }
          }
        }
      }
    }

We can delete all the additional parameters: sp, sv, sig and api-version. We're pretty much saying we only care about the formData.
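To see exactly what the proxy is hiding, here is a small sketch that pulls the query parameters out of the Flow Request-trigger URL shown earlier (fake signature included). It only uses Python's standard library; the variable names are mine.

```python
from urllib.parse import urlsplit, parse_qs

# The Flow Request-trigger URL from earlier in the post, fake signature and all.
backend_url = (
    "https://prod-18.australiasoutheast.logic.azure.com:443"
    "/workflows/76232c77d84d424b8e56ab2f88b672c4"
    "/triggers/manual/paths/invoke"
    "?api-version=2016-06-01"
    "&sp=%2Ftriggers%2Fmanual%2Frun"
    "&sv=1.0"
    "&sig=FAKE_KEY_NXpUV_FEyhl1BKgKftQ0-rcOPcE"
)

parts = urlsplit(backend_url)
hidden_params = parse_qs(parts.query)  # values come back URL-decoded, as lists

# These now live only in the proxy's backend URL, not in the OpenAPI file.
for name, values in hidden_params.items():
    print(f"{name} = {values[0]}")
```

Those four parameters are the ones that no longer need to be declared in the OpenAPI file.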

Go back to our PowerApps custom connectors - delete the old one and add this new OpenAPI file.

This is what PowerApps now sees: the URL is only /upload, and there are no hidden query parameters. It's just waiting for the body.

Delete the old connection and create a new one.

The method on the new connection now takes only one parameter, "file".

The result in PowerApps and SharePoint

This test picture is called "Azure Function is On Fire!"

So by using an Azure Functions proxy for a Flow web request, we've stripped the query string out of the URL and kept the functionality.

Azure Functions proxy for Azure Functions

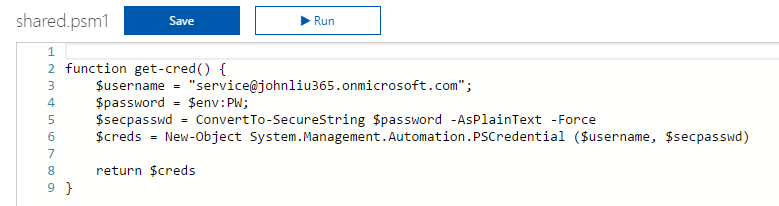

Some of our connections were to Azure Functions, so let's tidy that up too.

This is a pretty simple example. /listEmail is now hooked up, and the function's access code is hidden.

Azure Functions proxy for bypassing CORS

Everything you proxy in Azure Functions can be made available over CORS. You just need to turn on Azure Functions CORS with your domain.

CORS is designed to stop browser-based injection into, or consumption of, a service from another domain. But services are made to be consumed. CORS is enforced by the browser, so it blocks a browser call but never a server-based call.

An Azure Function runs on the server, so the proxy works because its call to the backend never runs into CORS at all. Switching on Azure Functions CORS is what lets pages on your domain call this Azure Function.

Anyway, if you hit CORS and get annoyed, just use an Azure Functions proxy to keep going.
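The browser-versus-server difference can be boiled down to a toy model. This is a deliberately simplified sketch, not how any browser actually implements CORS: the function names and origins are hypothetical, and it ignores preflight requests, credentials and headers.

```python
def browser_allows_response(page_origin, allowed_origins):
    """Mimic the browser-side check: the page may read the response only if
    the service lists the page's origin (or "*")."""
    return "*" in allowed_origins or page_origin in allowed_origins


def server_allows_response(allowed_origins):
    """A server-side caller (such as the proxy) performs no origin check."""
    return True


# Hypothetical origins, for illustration only.
MY_SITE = "https://my-site.example"
third_party_api_origins = []   # a third-party API with no CORS set up for us
proxy_origins = [MY_SITE]      # what we switch on for our own Function App

print(browser_allows_response(MY_SITE, third_party_api_origins))  # False
print(server_allows_response(third_party_api_origins))            # True
print(browser_allows_response(MY_SITE, proxy_origins))            # True
```

The browser can't read the third-party API directly, the proxy can, and the browser can read the proxy once our domain is allowed on it.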

Azure Functions proxy for 3rd party APIs

Let's add an external Open Weather API.

Azure Functions Proxies has ways of passing parameters through to the underlying backend URL. See the documentation: https://docs.microsoft.com/en-us/azure/azure-functions/functions-proxies

So the call to the SAMPLE open weather API is:

    http://samples.openweathermap.org/data/2.5/weather
    ?lat={request.querystring.lat}
    &lon={request.querystring.lon}
    &appid=b1b15e88fa797225412429c1c50c122a1

So we are exposing two query string parameters in our endpoint to be passed down.
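To make the pass-through concrete, here is a rough Python imitation of what the proxy does with those placeholders. It is only a sketch of the idea, not the actual Azure Functions implementation: `expand_backend_url` is a made-up helper, missing parameters simply become empty strings, and values are not re-encoded.

```python
import re
from urllib.parse import urlsplit, parse_qs

BACKEND_TEMPLATE = (
    "http://samples.openweathermap.org/data/2.5/weather"
    "?lat={request.querystring.lat}"
    "&lon={request.querystring.lon}"
    "&appid=b1b15e88fa797225412429c1c50c122a1"
)


def expand_backend_url(template, incoming_url):
    """Fill {request.querystring.NAME} placeholders from the incoming URL."""
    query = parse_qs(urlsplit(incoming_url).query)

    def lookup(match):
        # Assumption of this sketch: a missing parameter becomes "".
        return query.get(match.group(1), [""])[0]

    return re.sub(r"\{request\.querystring\.(\w+)\}", lookup, template)


# Hypothetical call to the proxy's weather route.
incoming = "https://funky-demo.azurewebsites.net/weather?lat=35&lon=139"
backend = expand_backend_url(BACKEND_TEMPLATE, incoming)
print(backend)
```

The caller only ever supplies lat and lon; the appid stays inside the proxy definition.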

One simple API Connection for me

    {
      "swagger": "2.0",
      "info": {
        "description": "",
        "version": "1.0.0",
        "title": "One-Funky-API"
      },
      "host": "funky-demo.azurewebsites.net",
      "basePath": "/",
      "schemes": [
        "https"
      ],
      "paths": {
        "/upload": {
          ...
        },
        "/listEmail": {
          ...
        },
        "/weather": {
          ...
        }
      }
    }

In all the examples, we clean up the API we are calling to hide away API keys and such, and make our endpoint really easy to consume from Flow, PowerApps or JavaScript with CORS.

And because both Microsoft Flow and Azure Functions are Serverless, we still don't care about servers ;-)